MCP SDK downloads hit 97 million per month across Python and TypeScript, with over 10,000 active servers indexed in public registries (Pento, 2025). Most guides explain what MCP does. This one explains how it works — every architectural layer from the host-client-server model to OAuth 2.1 + PKCE, with a real production example.

TL;DR

MCP follows a Host > Client > Server architecture using JSON-RPC 2.0 messaging. It supports stdio for local tools and Streamable HTTP for remote servers. Authentication uses OAuth 2.1 with mandatory PKCE since the November 2025 spec revision. With 97 million monthly SDK downloads and 10,000+ active servers, MCP is the de facto standard for connecting AI to external tools.

What Is MCP’s Architecture?

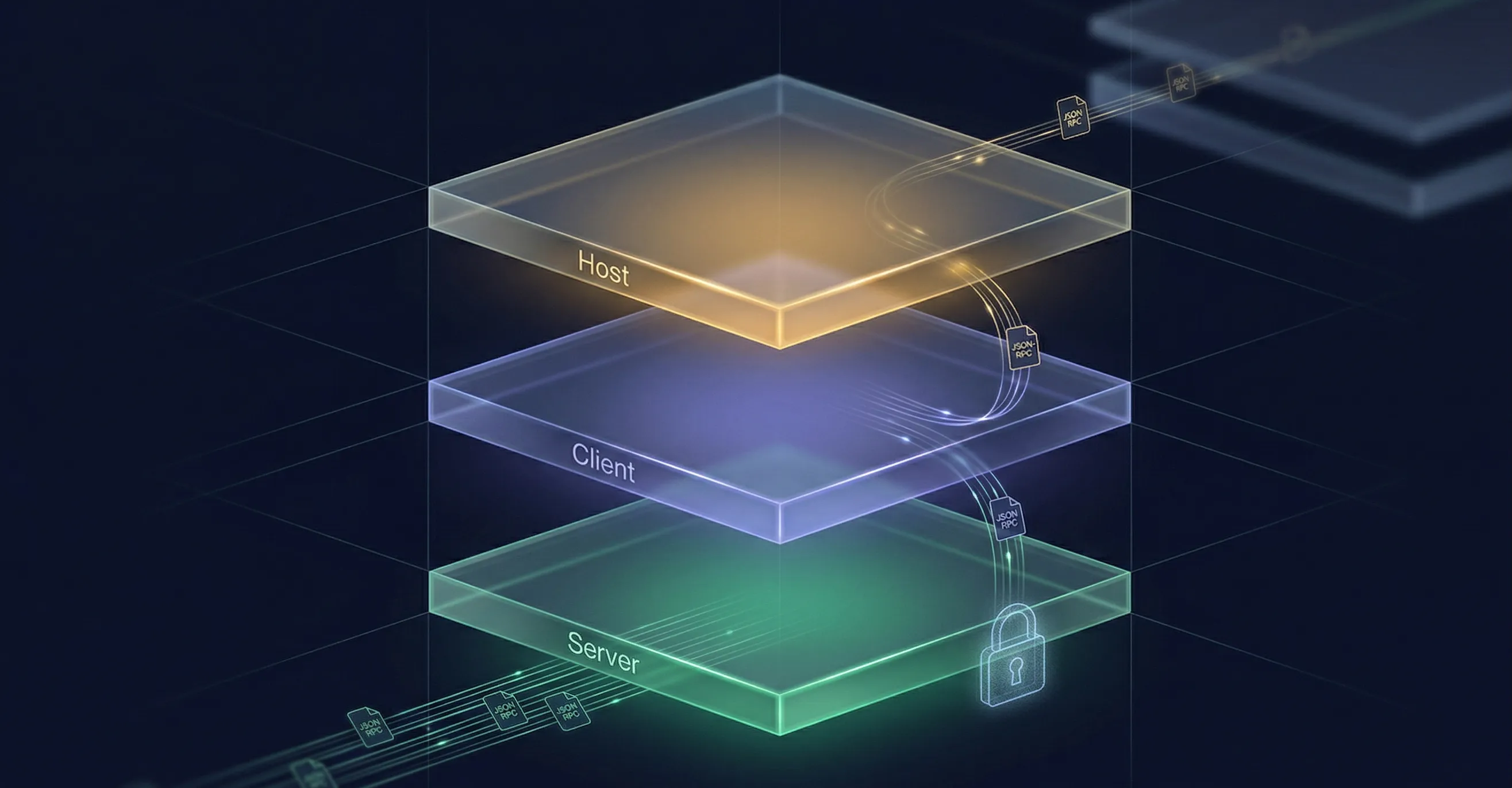

Over 300 MCP clients are now available across the ecosystem (MCP Manager, 2026), all built on the same three-tier architecture. MCP uses a Host > Client > Server model built on JSON-RPC 2.0, where a single host can manage multiple client-server connections at the same time.

The Host is the application your user interacts with: Claude Desktop, ChatGPT, Cursor, or a CLI tool like Claude Code. It runs the LLM, manages the conversation, and contains one or more MCP clients. The Client handles protocol negotiation and message routing between the host and a specific MCP server. Each client maintains a 1:1 connection with one server. The Server is a lightweight program that exposes capabilities (tools, data, prompt templates) through MCP.

Why separate clients from hosts? Because one host can connect to many servers simultaneously. Claude Desktop might connect to a file system server, a database server, and OpenClaw’s fleet management server all at once. Each connection runs through its own client instance, keeping protocol state isolated. If one server goes down, the others keep working.
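The isolation described above can be sketched in a few lines. This is a toy model rather than any SDK's API: the `Host` and `McpClient` classes, their fields, and the server names are all hypothetical, chosen only to show one client object per server connection.

```python
from dataclasses import dataclass, field


@dataclass
class McpClient:
    """One client per server: holds the protocol state for a single connection."""
    server_name: str
    connected: bool = True
    next_request_id: int = 1  # request ids are tracked per connection

    def new_request_id(self) -> int:
        request_id = self.next_request_id
        self.next_request_id += 1
        return request_id


@dataclass
class Host:
    """The user-facing application; owns one client per configured server."""
    clients: dict[str, McpClient] = field(default_factory=dict)

    def connect(self, server_name: str) -> McpClient:
        client = McpClient(server_name)
        self.clients[server_name] = client
        return client

    def available_servers(self) -> list[str]:
        # A failed connection only removes that one server from the pool.
        return [name for name, c in self.clients.items() if c.connected]


host = Host()
for name in ("filesystem", "database", "openclaw"):
    host.connect(name)

host.clients["database"].connected = False  # simulate one server going down
print(host.available_servers())  # ['filesystem', 'openclaw']
```

Because each client carries its own request counter and connection flag, nothing about the failed database connection leaks into the other two.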

This architecture borrows from the Language Server Protocol (LSP) that powers code intelligence in editors like VS Code. But MCP diverges in a key way: LSP assumes a 1:1 local connection between editor and language server. MCP is designed for 1:many connections across both local and remote servers. That distinction drove the transport layer design — MCP needed to work over the network, not just over local process pipes.

How Do MCP Transport Layers Work?

Remote MCP servers grew 4x since May 2025, driven by Streamable HTTP adoption (Zuplo MCP Report). MCP supports two active transports: stdio for local tools, which avoids the network entirely, and Streamable HTTP for remote servers with multi-client support. Streamable HTTP arrived in the March 2025 spec revision, which deprecated the older HTTP+SSE transport.

stdio runs an MCP server as a child process on your local machine. The client writes to the server’s STDIN and reads from STDOUT. There’s zero network overhead — no HTTP, no TLS, no DNS lookup. It’s the right choice for file system access, local databases, and any tool where the user controls the machine. The tradeoff? One client, one server, one machine. You can’t share a stdio server across users or access it remotely.
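On the wire, the stdio transport is newline-delimited JSON: one JSON-RPC message per line, with no raw newlines inside a message. The sketch below shows that framing only. The helper names `write_message` and `read_message` are made up for illustration, and an in-memory `StringIO` stands in for the child process's pipes.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    line = json.dumps(message, separators=(",", ":"))
    # json.dumps escapes newlines inside strings, so the line has no raw "\n".
    stream.write(line + "\n")
    stream.flush()


def read_message(stream):
    """Read one line and parse it; returns None at end of stream."""
    line = stream.readline()
    if not line:
        return None
    return json.loads(line)


# In a real client, `pipe` would be the child process's stdin/stdout
# (for example from subprocess.Popen); StringIO stands in for it here.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "ping"})
write_message(pipe, {"jsonrpc": "2.0", "id": 2, "method": "tools/list"})
pipe.seek(0)

first = read_message(pipe)
second = read_message(pipe)
print(first["method"], second["method"])  # ping tools/list
```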

Streamable HTTP runs the server as an independent process that accepts connections from multiple clients over a single HTTP endpoint. It replaced the older Server-Sent Events (SSE) transport, which required two separate endpoints — one for client requests and another for server responses. Streamable HTTP simplifies this to a single URL. It’s what powers every remote MCP server, including OpenClaw’s endpoint at https://openclaw.direct/mcp.
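A rough sketch of what a single-endpoint request looks like, using only the standard library. It builds the POST without sending it. The `build_post` helper and the `example.com` URL are placeholders; the `Accept` header advertises both response styles a Streamable HTTP server may choose, and `Mcp-Session-Id` is echoed back only if the server issued one during initialization.

```python
import json
import urllib.request


def build_post(endpoint: str, message: dict, session_id=None) -> urllib.request.Request:
    """Build (but do not send) one JSON-RPC message as an HTTP POST."""
    headers = {
        "Content-Type": "application/json",
        # The client accepts either a plain JSON reply or an SSE stream.
        "Accept": "application/json, text/event-stream",
    }
    if session_id is not None:
        headers["Mcp-Session-Id"] = session_id
    body = json.dumps(message).encode("utf-8")
    return urllib.request.Request(endpoint, data=body, headers=headers, method="POST")


request = build_post(
    "https://example.com/mcp",  # placeholder endpoint
    {"jsonrpc": "2.0", "id": 1, "method": "tools/list"},
)
print(request.get_method(), request.full_url)  # POST https://example.com/mcp
```

Every message goes to the same URL; the server decides per request whether to answer with a single JSON body or to open a stream.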

Which should you choose? If the person using the AI client also controls the machine the server runs on, use stdio. If they don’t — or if multiple clients need to connect — use Streamable HTTP. The ecosystem is shifting toward remote: company-operated MCP servers grew 232% between August 2025 and February 2026 (Bloomberry).

What Are MCP’s Core Primitives?

The top 50 MCP servers generate a combined 622,000+ monthly searches worldwide (MCP Manager, 2026). That demand is driven by three standardized primitives — Tools, Resources, and Prompts — each discoverable at runtime via tools/list, resources/list, and prompts/list methods.

Tools are executable functions. Each tool has a name, a human-readable description, and a JSON Schema defining its input parameters. When Claude asks “what can you do?” of an MCP server, it gets back a structured list of tools with enough metadata for the model to decide when and how to call them. Tools are model-controlled — the AI decides when to invoke them based on the conversation.
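For a concrete picture, here is what one entry in a tools/list result might look like. The `name`, `description`, and `inputSchema` field names follow the protocol; the `search_files` tool itself and the `missing_required` helper are invented for this illustration.

```python
import json

# A hypothetical tool entry, shaped like one item in a tools/list result.
search_files_tool = {
    "name": "search_files",
    "description": "Search file names under a directory for a substring.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "directory": {"type": "string"},
            "query": {"type": "string"},
            "max_results": {"type": "integer", "minimum": 1, "maximum": 100},
        },
        "required": ["directory", "query"],
    },
}


def missing_required(tool: dict, arguments: dict) -> list[str]:
    """Return the required argument names that the caller left out."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


print(json.dumps(search_files_tool["inputSchema"]["required"]))  # ["directory", "query"]
print(missing_required(search_files_tool, {"query": "report"}))  # ['directory']
```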

Resources provide read-only access to data: file contents, database views, dashboard snapshots, API responses. Unlike tools, resources are application-controlled — the host app decides which resources to expose and when to fetch them. Think of resources as context the application feeds to the model without the model asking.

Prompts are reusable templates for common workflows. A code review prompt, a data analysis template, a report generator — these are user-controlled, selected explicitly by the human. They aren’t called automatically by the model or the app.

This three-way control model is deliberate. It keeps the boundaries clear: the model acts through tools, the app provides context through resources, and the user triggers workflows through prompts. No single party controls everything.
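The control model fits in a small lookup table. The method names are the protocol's own; the `PRIMITIVES` table and the `list_request` helper are just one assumed way to organize them.

```python
# Who decides when each primitive is used, and which methods expose it.
PRIMITIVES = {
    "tools": {"controlled_by": "model", "list": "tools/list", "use": "tools/call"},
    "resources": {"controlled_by": "application", "list": "resources/list", "use": "resources/read"},
    "prompts": {"controlled_by": "user", "list": "prompts/list", "use": "prompts/get"},
}


def list_request(primitive: str, request_id: int) -> dict:
    """Build the JSON-RPC discovery request for one primitive."""
    return {"jsonrpc": "2.0", "id": request_id, "method": PRIMITIVES[primitive]["list"]}


for name, info in PRIMITIVES.items():
    print(f"{name}: {info['controlled_by']}-controlled, discovered via {info['list']}")

print(list_request("resources", 7)["method"])  # resources/list
```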

How Does MCP Authentication Work?

The November 2025 spec revision made OAuth 2.1 with PKCE non-negotiable for all public-facing remote MCP servers. That’s not a suggestion — the spec mandates the S256 challenge method and forbids the implicit grant flow entirely. The June 2025 revision formally split MCP servers from authorization servers, enabling enterprise SSO integration through standard OAuth infrastructure.

Why PKCE specifically? CLI tools and desktop apps can’t securely store a client secret. PKCE (Proof Key for Code Exchange, RFC 7636) solves this. The client generates a random code_verifier, hashes it into a code_challenge, and sends only the hash in the authorization request. To exchange the authorization code for tokens, the client sends the original verifier. Even if someone intercepts the authorization code, they can’t redeem it without the verifier, which never appears in the browser redirect and is sent only in the direct token request.
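The verifier and challenge steps are short enough to write out in full. This sketch follows the S256 method from RFC 7636 using only the standard library; the function names are illustrative, and the final check uses the worked example from Appendix B of the RFC.

```python
import base64
import hashlib
import secrets


def make_code_verifier() -> str:
    # 32 random bytes encode to 43 URL-safe characters, the RFC 7636 minimum.
    return secrets.token_urlsafe(32)


def code_challenge_s256(verifier: str) -> str:
    """BASE64URL(SHA256(verifier)) with the trailing '=' padding removed."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


verifier = make_code_verifier()
challenge = code_challenge_s256(verifier)
# The authorization request carries `challenge` plus code_challenge_method=S256;
# the later token request carries `verifier`.

# Worked example from RFC 7636, Appendix B:
rfc_verifier = "dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk"
print(code_challenge_s256(rfc_verifier))  # E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM
```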

The flow also uses Protected Resource Metadata (RFC 9728) for server discovery. When a client first connects to an MCP server, it fetches metadata to find the associated authorization server — no hardcoded auth URLs needed. This is what enables a tool like Cursor to connect to any MCP server without prior configuration of the auth endpoint.
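A minimal sketch of the discovery step, assuming the default well-known location from RFC 9728 (a server can also point clients at its metadata URL in a `WWW-Authenticate` header). The `example.com` hosts and the sample metadata document are placeholders, not any real server's configuration.

```python
import json
from urllib.parse import urlsplit, urlunsplit

WELL_KNOWN = "/.well-known/oauth-protected-resource"


def metadata_url(resource_url: str) -> str:
    """Insert the well-known segment between the host and the resource path."""
    parts = urlsplit(resource_url)
    path = parts.path.rstrip("/")
    return urlunsplit((parts.scheme, parts.netloc, WELL_KNOWN + path, "", ""))


def authorization_servers(metadata_json: str) -> list[str]:
    """Pull the list of authorization server issuers out of the metadata."""
    return json.loads(metadata_json).get("authorization_servers", [])


# A made-up metadata document, shaped like an RFC 9728 response.
sample_metadata = json.dumps({
    "resource": "https://example.com/mcp",
    "authorization_servers": ["https://auth.example.com"],
})

print(metadata_url("https://example.com/mcp"))
# https://example.com/.well-known/oauth-protected-resource/mcp
print(authorization_servers(sample_metadata))  # ['https://auth.example.com']
```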

What Is MCP’s Security Model?

Astrix Security’s 2025 audit of 5,000+ MCP servers found that servers without proper input validation were vulnerable to tool-poisoning attacks — prompting the spec’s stricter validation requirements. MCP enforces security at three layers: transport, consent, and validation.

Transport security requires HTTPS for all Streamable HTTP connections. Data in transit is encrypted via TLS. Local stdio connections skip this — they run within a single machine, so network encryption isn’t relevant.

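User consent means the AI shouldn’t be able to call tools silently. Before a tool executes, the host shows the user the tool name, its description, and the proposed arguments, and the user approves or rejects the call. Enforcement lives in the host application rather than on the wire, so the guarantee is only as strong as the client you use. Done properly, this prevents scenarios where a compromised model tries to exfiltrate data through a tool call you didn’t expect.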
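One way a host might implement that gate is sketched below. Everything here is hypothetical: `call_with_consent`, the `approve` callback (which a real host would back with a UI prompt), and the `invoke` callback standing in for the actual tools/call round trip.

```python
from typing import Any, Callable


def call_with_consent(
    tool_name: str,
    description: str,
    arguments: dict,
    approve: Callable[[str, str, dict], bool],
    invoke: Callable[[str, dict], Any],
) -> dict:
    """Run a tool only after the user has seen and approved the exact call."""
    if not approve(tool_name, description, arguments):
        # The tool is never invoked when the user declines.
        return {"status": "rejected", "tool": tool_name}
    return {"status": "ok", "result": invoke(tool_name, arguments)}


calls = []  # records which tools actually ran


def fake_invoke(name: str, args: dict) -> str:
    calls.append(name)
    return f"{name} executed"


# Stand-in approval policy: allow reads, refuse anything else.
approve_reads_only = lambda name, desc, args: name.startswith("list_")

print(call_with_consent("list_files", "List files", {}, approve_reads_only, fake_invoke))
print(call_with_consent("delete_file", "Delete a file", {"path": "a.txt"}, approve_reads_only, fake_invoke))
print(calls)  # ['list_files']
```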

Input validation applies JSON Schema to every tool call. The server defines what arguments each tool accepts and validates incoming arguments against that schema before execution. This blocks injection attacks where malformed arguments might trick a tool into unintended behavior. A related safeguard applies during the OAuth flow: the state parameter (a cryptographically random value stored before the auth redirect) prevents CSRF attacks.

In practice, the third layer — input validation — is where most production issues surface. Schema enforcement catches obvious problems, but AI agents using MCP servers can still craft arguments that are technically valid but semantically unexpected. A robust MCP control plane adds governance and audit logging on top of these protocol-level protections. If you’re building an MCP server, invest in argument validation beyond what the schema requires. Check ranges, enforce allow-lists, and log every tool invocation.
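A minimal sketch of what validation beyond the schema can look like on the server side. The tool, its `plan` and `seats` arguments, and the limits are all invented for illustration; the point is the combination of an allow-list, a range check, and a log line per invocation.

```python
import logging

logger = logging.getLogger("mcp.tools")

# Hypothetical business rules that a JSON Schema alone would not capture.
ALLOWED_PLANS = {"starter", "team"}
MAX_SEATS = 50


def validate_resize_args(arguments: dict) -> list[str]:
    """Return a list of semantic problems; an empty list means the call may run."""
    errors = []
    plan = arguments.get("plan")
    seats = arguments.get("seats")

    if plan not in ALLOWED_PLANS:
        errors.append(f"plan {plan!r} is not on the allow-list")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(seats, int) or isinstance(seats, bool) or not 1 <= seats <= MAX_SEATS:
        errors.append(f"seats must be an integer between 1 and {MAX_SEATS}")

    # Log every invocation, accepted or not, for later audit.
    logger.info("resize_workspace args=%s errors=%s", arguments, errors)
    return errors


print(validate_resize_args({"plan": "team", "seats": 10}))  # []
print(len(validate_resize_args({"plan": "enterprise", "seats": 5000})))  # 2
```

Both calls in the usage lines would pass a schema that only says "plan is a string, seats is an integer"; only the second is rejected once the semantic rules apply.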

How Does Tool Discovery Work?

SkillsIndex tracks 4,133 company-operated MCP servers as of early 2026, up 873% from 425 in mid-2025 (SkillsIndex). Every one of those servers is discoverable at runtime through the same protocol flow.

When a client connects, it sends an initialize request. The server responds with its capabilities — which primitives it supports (tools, resources, prompts). The client then calls tools/list to get a structured inventory of every available tool: name, description, and JSON Schema for arguments. No documentation needed. No API explorer. The client learns what the server can do by asking it.
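The message sequence can be written out as plain JSON-RPC. This sketch builds the three client-side messages without sending them. The `request` and `notification` helpers and the `clientInfo` values are made up, and the `protocolVersion` string is just one published revision used as an example. Between the initialize response and the first tools/list call, the client also sends an `initialized` notification, which carries no id because it expects no reply.

```python
import itertools
import json

_ids = itertools.count(1)


def request(method: str, params=None) -> dict:
    """A JSON-RPC request: has an id, so the server must answer it."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def notification(method: str) -> dict:
    """A JSON-RPC notification: no id, so no response is expected."""
    return {"jsonrpc": "2.0", "method": method}


handshake = [
    request("initialize", {
        "protocolVersion": "2025-06-18",  # example value
        "capabilities": {},
        "clientInfo": {"name": "example-client", "version": "0.1.0"},
    }),
    notification("notifications/initialized"),
    request("tools/list"),
]

for message in handshake:
    print(json.dumps(message))
```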

This runtime discovery is what makes MCP composable. An AI host can connect to five servers and immediately know what 50+ tools are available. It can reason about which tool to use based on the descriptions and schemas — without any hardcoded knowledge of any specific server.
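How a host merges those per-server inventories is up to the host. One plausible approach, sketched here with invented server and tool names, is to prefix each tool with its server so that two servers can expose a tool with the same name without colliding.

```python
def build_catalog(tools_by_server: dict[str, list[dict]]) -> dict[str, dict]:
    """Merge per-server tools/list results into one lookup keyed by 'server.tool'."""
    catalog = {}
    for server, tools in tools_by_server.items():
        for tool in tools:
            catalog[f"{server}.{tool['name']}"] = {"server": server, **tool}
    return catalog


# Hypothetical tools/list results from two connected servers.
discovered = {
    "filesystem": [
        {"name": "search", "description": "Search file names."},
        {"name": "read_file", "description": "Read a file."},
    ],
    "database": [
        {"name": "search", "description": "Full-text search over rows."},
    ],
}

catalog = build_catalog(discovered)
print(sorted(catalog))  # ['database.search', 'filesystem.read_file', 'filesystem.search']
print(catalog["database.search"]["server"])  # database
```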

Real Example: OpenClaw’s MCP Server

OpenClaw’s production MCP server at https://openclaw.direct/mcp puts every architectural concept above into practice. It uses Streamable HTTP transport, authenticates via OAuth 2.1 + PKCE, and exposes 11 tools for AI fleet management — connecting from any MCP client takes under two minutes.

Here’s what the tool discovery looks like in practice. When Claude Code calls tools/list on OpenClaw’s server, it gets back 11 tools for AI fleet management: list_employees, show_employee, create_employee, update_employee, fire_employee, rehire_employee, put_employee_on_leave, return_employee_from_leave, employee_wellness_check, employee_timesheet, and list_plans. Each includes a description and argument schema that Claude uses to decide when to invoke them.
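Once the list is known, invoking a tool is a single tools/call request. The sketch below uses the tool names listed above. Each tool's argument schema isn't reproduced in this article, so the empty `arguments` object is an assumption, and `tools_call_request` is a hypothetical helper rather than part of any SDK.

```python
import json

# Tool names as returned by tools/list on OpenClaw's server (from the text above).
OPENCLAW_TOOLS = [
    "list_employees", "show_employee", "create_employee", "update_employee",
    "fire_employee", "rehire_employee", "put_employee_on_leave",
    "return_employee_from_leave", "employee_wellness_check",
    "employee_timesheet", "list_plans",
]


def tools_call_request(request_id: int, name: str, arguments=None) -> dict:
    """Build a tools/call request, refusing names the server never advertised."""
    if name not in OPENCLAW_TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        # Assumption: no arguments; the real schema comes from tools/list.
        "params": {"name": name, "arguments": arguments or {}},
    }


print(len(OPENCLAW_TOOLS))  # 11
print(json.dumps(tools_call_request(3, "list_employees")))
```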

The transport choice was deliberate. OpenClaw’s MCP server needs to handle connections from Claude, ChatGPT, Cursor, and Windsurf simultaneously — which rules out stdio. Streamable HTTP also enables load balancing and horizontal scaling behind standard HTTP infrastructure. The 2026 roadmap’s planned stateless transport improvements will make this even more straightforward — see the latest spec developments for details.

Want to try it yourself? The setup is a one-liner for Claude Code:

claude mcp add --transport http openclaw https://openclaw.direct/mcp

For a step-by-step walkthrough, see our guide on how to set up MCP with Claude. Or browse real-world MCP examples for workflow inspiration. You can also schedule cron jobs for your AI agent once your MCP connection is live.

Frequently Asked Questions

How does MCP differ from a REST API?

MCP is a protocol layer on top of APIs. While REST requires custom integration per service, MCP provides standardized tool discovery, schema validation, and authentication across 10,000+ servers using JSON-RPC 2.0 (Pento, 2025). Build one MCP server, and it works with every compatible client.

Is MCP secure enough for production use?

Yes. The November 2025 spec mandates OAuth 2.1 + PKCE for all remote servers, with transport-layer TLS, user consent for tool execution, and JSON Schema input validation. Major deployments at Block, Bloomberg, and Amazon validate production readiness (MCP Manager).

Should I use stdio or Streamable HTTP?

Use stdio for local tools where the user controls the machine: there is no network hop and setup is simple. Use Streamable HTTP for remote servers, multi-client scenarios, or cloud deployments. Remote MCP servers grew 4x since May 2025 (Zuplo).

What programming languages support MCP?

Official SDKs exist for Python and TypeScript, with 97 million combined monthly downloads (Pento, 2025). SDKs are also available for Go, Java, Rust, C#, and Ruby, several of them now maintained under the official MCP organization. The protocol uses JSON-RPC 2.0, so any language that handles JSON can implement it.

Who governs the MCP specification?

MCP was created by Anthropic in November 2024 and donated to the Agentic AI Foundation under the Linux Foundation in December 2025. Gartner predicts 75% of gateway vendors will have MCP features by 2026 (Pento). Development is now driven by Working Groups and Spec Enhancement Proposals.

Key Takeaways

- Architecture: Host > Client > Server model with JSON-RPC 2.0 messaging and 1:many connections

- Transports: stdio for local tools (microsecond latency), Streamable HTTP for remote servers (multi-client, production-grade)

- Primitives: Tools (model-controlled), Resources (app-controlled), Prompts (user-controlled) — all discoverable at runtime

- Auth: OAuth 2.1 + PKCE is mandatory for remote servers since November 2025

- Scale: 97M monthly SDK downloads, 10,000+ servers, backed by Anthropic, OpenAI, Google, and Microsoft

See MCP architecture in action — connect to OpenClaw’s MCP server in under two minutes and manage your AI fleet from Claude, ChatGPT, Cursor, or any MCP client. Sign up free to get started.